Effective exam preparation is influenced by a variety of factors, including socio-demographics, motivation, pedagogy, and environmental context (Doğanlı & Çelik, 2021; Hosen et al., 2024; Salloum et al., 2023; Zerdani & Lotfi, 2021). Prior research has addressed many of these elements, including the use of mobile apps. Mobile apps have become a prominent and effective means of language acquisition, offering learners skill development, flexibility, engagement, and opportunities for self-directed study. Research consistently shows that mobile apps can enhance language skills, particularly vocabulary, listening, and speaking, while also increasing learner motivation and autonomy (Gangaiamaran & Pasupathi, 2017; Kannan & Meenakshi, 2023; Lim & Toh, 2024; Lin & Lin, 2019). However, many mobile apps focus on isolated vocabulary, speaking, and writing skills rather than on content, theory, and frameworks (Lim & Toh, 2024). As mobile device use becomes ubiquitous among college students (Gierdowski et al., 2020), with daily smartphone engagement rising to five hours (Dong et al., 2024; Howarth, 2023), researchers have examined the relationship between mobile app use and student performance. Mobile apps are widely recognized for increasing student engagement and motivation through gamification and learning activities in language proficiency courses (Gou, 2023; Klimova, 2019). Thus, while the general relationship between technology and learning is recognized, a significant research gap remains. Little is known about how a publisher-produced mobile app, actively promoted by the professor as part of modern pedagogy, supports students in content-heavy courses such as marketing, where the mechanisms of concept acquisition via mobile apps remain underexplored.

This study investigates the academic impact of mobile textbook apps specifically marketed for exam preparation in non-language disciplines. Although mobile learning apps are widely available, few studies have examined their effectiveness in content-intensive subjects like marketing. Examining these apps in such courses matters for marketing education because it offers empirical insight into how widely adopted technology can improve learning outcomes where deep content understanding is paramount. The originality of this research lies in its focus on instructor-led promotion within this specific, under-researched context. Informed by Bandura's Social Cognitive Theory (2001), Mayer's Cognitive Theory of Multimedia Learning (1999), and Mayer's e-Learning Theory (2017), we explore how in-class modeling of a mobile exam preparation app influences students' engagement and academic outcomes. Specifically, we examine a marketing course in which the instructor promoted a mobile exam preparation app, comparing student performance with and without the app. In doing so, we provide empirical evidence on the academic impact of such apps in a content-driven, non-language discipline and identify a practical path for educators to enhance student learning through targeted technological integration.

The research focuses on a large undergraduate Principles of Marketing course where students were given access to McGraw-Hill’s mobile Sharpen app (Carnegie Classification of Institutions of Higher Education, 2024). The instructor incorporated brief, consistent demonstrations of the app during lectures between the second and third exams to encourage adoption. Using comparative analysis across two semesters, one with app promotion (Fall 2023) and one without (Spring 2023), this study explores the relationship between faculty promotion of mobile apps and student exam performance.

LITERATURE REVIEW

This literature review synthesizes theoretical and empirical perspectives on mobile learning, focusing on how mobile applications influence student engagement and academic performance. It is organized into four sections: (1) theoretical frameworks underpinning mobile learning, (2) research on mobile apps and language acquisition, (3) empirical statistics and studies on mobile app use in education, and (4) a contextual overview of mobile exam preparation applications.

THEORETICAL FRAMEWORK

Mobile learning applications can be understood through established learning theories that explain how students engage with technology. Bandura’s Social Cognitive Theory (2001) posits that learners acquire knowledge through observation, imitation, and social interaction. In classroom settings, when instructors visibly model the use of mobile learning apps (e.g., demonstrating a flashcard or quiz feature), students are more likely to adopt and engage with the tool. This modeling effect is particularly relevant in marketing education, where instructor behavior can shape classroom norms. In our context, the instructor’s active promotion and in-class demonstrations of the Sharpen app represent modeling. This instructor promotion is expected to positively influence students’ self-efficacy regarding app use and their outcome expectations, thereby increasing student engagement with the app (e.g., frequency of use, utilization of features like quizzes and flashcards). Higher engagement, in turn, is theorized to improve exam performance by providing more opportunities for practice and reinforcement.

Complementing this, the Cognitive Theory of Multimedia Learning (CTML; Moreno & Mayer, 1999) focuses on managing cognitive load in digital environments. Effective mobile apps reduce extraneous information and use multimedia elements (e.g., visuals, audio, interactivity) to support deep learning without overwhelming the learner, consistent with Mayer's principles of reducing extraneous load and promoting germane processing. The Sharpen app's features, such as overview videos, chapter summaries, exam-prep quizzes, step-by-step practice problems, and flashcards, are designed to align with these principles. By presenting information efficiently and interactively, these features aim to optimize concept acquisition and understanding in content-intensive marketing courses. E-Learning Theory (Mayer, 2017) integrates cognitive science and instructional design, offering principles for structuring effective digital learning experiences. When instructors guide students in using pedagogically sound apps and embed those apps into coursework, both engagement and performance can improve. Taken together, these theories underpin our expectation that the app's impact on exam performance is greatest when effective instructional design (CTML and e-Learning principles embedded in the app) and social influence (SCT's modeling effect through instructor promotion) jointly encourage student use and deep learning.

MOBILE APPS AND LANGUAGE ACQUISITION

Research on mobile applications for language learning has consistently shown that these tools can enhance language proficiency across multiple domains, including vocabulary acquisition, listening, speaking, and overall communicative competence (Gangaiamaran & Pasupathi, 2017; Kannan & Meenakshi, 2023; Lim & Toh, 2024; Lin & Lin, 2019). Lim and Toh (2024) conducted a systematic review highlighting that mobile apps can increase learner motivation and autonomy while supporting interactive and self-directed learning. Similarly, Kannan and Meenakshi (2023) emphasized the role of language-immersion apps in providing contextualized learning environments that foster practical language use. Lin and Lin (2019), through a meta-analysis, demonstrated that mobile-assisted vocabulary learning significantly improves learners’ retention and application of new words, while Gangaiamaran and Pasupathi (2017) reported widespread adoption of mobile apps in classrooms, particularly for supplemental practice outside traditional instruction.

Despite these positive outcomes, the literature identifies notable limitations in current mobile app research for language learning. Many commercial applications focus predominantly on isolated vocabulary practice rather than integrating language use within meaningful contexts, and they often lack adaptive or explanatory feedback that could tailor learning to individual needs (Gangaiamaran & Pasupathi, 2017; Lin & Lin, 2019). Research on the impact of mobile-assisted learning on academic achievement further supports the potential of these tools, but these studies remain largely confined to language skill development (Gou, 2023; Klimova, 2019). Klimova (2019) found that mobile learning can enhance students' performance in structured learning environments, while Gou (2023) highlighted improvements in both language proficiency and general academic outcomes among college students, reinforcing the practical value of integrating mobile technologies into educational contexts.

Although these studies collectively indicate that mobile apps can support language proficiency and academic performance, a significant gap remains in the literature. Most research has focused on language acquisition in isolation, with limited attention to content-heavy, theory-driven college courses where understanding frameworks, models, and applied knowledge is critical (Kannan & Meenakshi, 2023; Lim & Toh, 2024). Few studies have examined how mobile applications can be leveraged to support deep content learning in traditional higher education settings, particularly for courses that integrate complex concepts alongside language development. Addressing this gap would extend the practical and theoretical relevance of mobile-assisted learning beyond language practice to comprehensive content mastery (Gangaiamaran & Pasupathi, 2017; Gou, 2023; Klimova, 2019; Lin & Lin, 2019).

EMPIRICAL STATISTICS AND STUDIES ON MOBILE LEARNING

Building on the theoretical foundations of social cognitive theory and multimedia learning, a growing body of empirical research has examined the prevalence of student mobile device ownership and usage. Much of this work, however, emphasizes general measures of device ownership and hours spent on devices rather than specific applications for academic purposes. In particular, limited research has systematically tracked the use of mobile textbook applications.

Industry reports highlight the ubiquity of mobile learning practices. For example, EDUCAUSE surveys show that approximately 81% of students reported using smartphones for learning at least once per week in 2021, with institutional apps such as Canvas Mobile, UCF Mobile, and Zoom ranking among the most frequently used. Canvas Mobile alone experienced a 91% increase in usage during this period (deNoyelles et al., 2023). The broader market for mobile educational technology is also expanding rapidly, with projections estimating growth from $7.5 billion in 2024 to $83.3 billion by 2034 (Revankar, 2025). Yet despite the growth of the industry, app engagement remains limited. Reports indicate that approximately 75% of downloaded mobile applications are used fewer than ten times before being abandoned (Eser, 2025).
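As a quick arithmetic check on the market projection cited above, the two figures imply a compound annual growth rate (CAGR) of roughly 27% per year. This is our own calculation, not a figure reported by Revankar (2025), and it assumes steady annual compounding over the ten years from 2024 to 2034:

$$
\text{CAGR} = \left(\frac{83.3}{7.5}\right)^{1/10} - 1 \approx 11.107^{0.1} - 1 \approx 0.272
$$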

National statistics further underscore this gap. The National Center for Education Statistics (NCES) provides robust data on online course enrollment and student access to digital resources, including e-textbooks and open educational resources. However, NCES has not yet published statistics specifically addressing the adoption or pedagogical impact of mobile textbook applications, further supporting the need for empirical inquiry into this underexplored domain.

Independent researchers have begun to address this gap by investigating the effects of mobile learning applications on student engagement, satisfaction, and academic performance. A systematic review by Rangel-de Lázaro and Duart (2023) found that mobile tools in online higher education significantly enhanced interaction, content creation, and collaboration between students and instructors. These findings suggest that mobile apps are particularly effective when used to supplement traditional instruction, offering students opportunities for self-paced review and practice. Similarly, Tamilarasi (2023) noted that educators increasingly recognize mobile devices as accessible tools for learning, communication, and academic support.

In discipline-specific contexts, mobile apps have demonstrated promise. For example, Voshaar et al. (2022) reported that first-year accounting students using gamified mobile learning apps showed higher retention and greater satisfaction compared to those employing traditional study methods. Importantly, the study emphasized the role of instructor involvement, noting that modeling app usage and embedding it into coursework were critical to sustaining engagement.

Taken together, the empirical literature points to both opportunities and gaps. While there is strong evidence for the widespread adoption of digital devices, digital materials, and general education apps, significantly less evidence exists regarding mobile textbook applications. Questions remain about the frequency of student use, the instructional conditions that support sustained engagement, and the extent to which mobile textbook apps can influence learning outcomes in higher education.

DESCRIPTION OF APPS (CONTEXTUAL BACKGROUND)

Given this interest in mobile learning, many educational apps have been developed. A variety of mobile apps are available for exam preparation, ranging from publisher-developed tools like McGraw-Hill's Sharpen to third-party platforms such as Quizlet and Anki. Table 1 compares these apps on features such as flashcards, videos, and practice exams, as well as on student downloads. The McGraw Hill Sharpen app was selected for this study because of its alignment with course content.

MCGRAW HILL SHARPEN APP

The McGraw Hill Sharpen app is a relatively recent mobile and web-based study platform developed to support undergraduate students in both exam preparation and ongoing engagement with course material. Introduced in Fall 2022 in response to shifts in student study practices, many of which were accelerated by the COVID-19 pandemic, Sharpen combines vetted academic content with short, interactive, and accessible learning tools (McGraw Hill, 2022).

The app’s core features are designed to align with contemporary learning preferences. These include brief video explanations, modeled after short-form media formats, which summarize chapter concepts and are paired with digital flashcards. For quantitatively oriented disciplines, Sharpen provides step-by-step guided solutions, enabling students to practice problem solving in a structured manner. The app also integrates gamified progress tracking through personalized study paths, dashboards, and achievement badges, all intended to sustain motivation and enhance self-monitoring of learning progress (McGraw Hill, n.d.).

From a pedagogical perspective, Sharpen offers several benefits. Its formative assessment tools, which range from introductory to exam-level quizzes, allow students to identify gaps in their knowledge and build confidence gradually as they prepare for higher-stakes assessments. Faculty have also reported using the app to support flipped classroom approaches, asking students to preview material before class so that face-to-face time can be devoted to deeper application and discussion. Moreover, because Sharpen delivers content in multiple modalities (videos, text summaries, quizzes, and flashcards), it supports accessibility for students with diverse learning preferences and study habits.

Nevertheless, potential drawbacks must be acknowledged. These include subscription costs, which may present access barriers for some students, as well as the potential for “digital fatigue” among learners already immersed in technology-mediated environments. Despite these challenges, anecdotal feedback from both students and faculty suggests that Sharpen can increase motivation to study by making high-quality, course-aligned resources easily accessible on mobile devices.

Our study seeks to move beyond anecdotal evidence to examine empirically the impact of Sharpen on student learning outcomes, engagement, and confidence in marketing education.

Integrating Social Cognitive Theory (SCT) and Cognitive Theory of Multimedia Learning (CTML), we hypothesize that instructor-led promotion of the mobile exam preparation app will significantly improve student exam performance in marketing courses through two measurable pathways: (1) an SCT pathway, in which instructor modeling increases student engagement (measured as frequency of use and feature utilization), and (2) a CTML pathway, in which the app's pedagogical design enhances concept acquisition (measured through performance on app-based assessments). Both engagement and concept acquisition serve as mediators between instructor promotion and final exam outcomes. The variables are operationalized as follows:

- Instructor promotion (independent variable): measured by frequency of in-class demonstrations (at least 3 per course module), quality of implementation (rated 4 or higher on a 5-point rubric by independent observers), and consistency of encouragement throughout the semester.
- Student engagement (mediator 1), operationally defined as:
  - Frequency of use: ≥3 logins per week
  - Feature utilization: ≥70% of core features accessed weekly
  - Session duration: ≥15 minutes per session on average
- Concept acquisition (mediator 2), operationally defined as:
  - Accuracy on app-based knowledge checks: ≥80% correct responses
  - Progress through learning modules: ≥90% completion rate
  - Application of concepts in scenario-based assessments: ≥75% proficiency
- Exam performance (dependent variable): measured by final exam scores (percentage correct) on standardized assessments administered at course completion, controlling for prior academic performance.

To test this hypothesis, we used a quasi-experimental design to examine the influence of instructor-promoted mobile textbook applications on exam performance among undergraduate business students.
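The operational definitions in the hypothesis translate directly into decision rules. The Python sketch below encodes them for one student-week of usage data and one set of concept-acquisition measures. The record layouts and field names (`WeeklyUsage`, `ConceptChecks`, and so on) are hypothetical, because the paper does not describe how Sharpen usage data were exported. The sketch also assumes that a student must meet all three criteria in a group to count as engaged, or as having acquired the concepts. The text does not state this explicitly.

```python
from dataclasses import dataclass


# Hypothetical record of one student's app activity in one week.
@dataclass
class WeeklyUsage:
    logins: int                   # number of logins during the week
    core_features_used: int       # distinct core features opened that week
    core_features_total: int      # core features available in the app
    session_minutes: list[float]  # length of each session, in minutes


# Hypothetical record of a student's concept-acquisition measures (0-1 scale).
@dataclass
class ConceptChecks:
    knowledge_check_accuracy: float  # share of correct knowledge-check answers
    module_completion: float         # share of learning modules completed
    scenario_proficiency: float      # proficiency on scenario-based items


def meets_engagement_criteria(week: WeeklyUsage) -> bool:
    """Apply the three engagement thresholds jointly (an assumption)."""
    if week.core_features_total <= 0:
        raise ValueError("core_features_total must be positive")
    feature_share = week.core_features_used / week.core_features_total
    mean_session = (sum(week.session_minutes) / len(week.session_minutes)
                    if week.session_minutes else 0.0)
    return week.logins >= 3 and feature_share >= 0.70 and mean_session >= 15.0


def meets_acquisition_criteria(checks: ConceptChecks) -> bool:
    """Apply the three concept-acquisition thresholds jointly (an assumption)."""
    return (checks.knowledge_check_accuracy >= 0.80
            and checks.module_completion >= 0.90
            and checks.scenario_proficiency >= 0.75)


week = WeeklyUsage(logins=4, core_features_used=4, core_features_total=5,
                   session_minutes=[12, 20, 18, 16])
print(meets_engagement_criteria(week))
```

Keeping the thresholds in small named functions makes it easy to report how many students met each criterion. It also allows the sensitivity of any mediation result to the chosen cut-offs to be checked.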

METHODOLOGY

This design involved a comparison of student performance across two distinct semesters: Spring 2023 and Fall 2023. The control group consisted of 674 students enrolled in the Spring 2023 semester, during which instructors did not promote any mobile applications, and students received traditional study instruction. The treatment group comprised 845 students from the Fall 2023 semester. In this semester, the instructor actively demonstrated and encouraged the use of McGraw-Hill’s Sharpen+ mobile app during class sessions. Specifically, the instructor incorporated brief, consistent demonstrations of the app during lectures between the second and third exams in Fall 2023 to encourage adoption. Students were encouraged to integrate key features like quizzes and flashcards into their regular study routines. Participants were undergraduate students in a Principles of Marketing course. A defining characteristic of this quasi-experimental design is the non-randomized assignment of participants to the control and treatment groups. Students were naturally enrolled in either Spring or Fall semester, rather than being randomly assigned to an intervention or control condition.

The primary data source for this study consisted of student exam scores, which served as a direct measure of academic performance. All students completed the same standardized exams, composed of multiple-choice questions designed to assess their understanding of core marketing concepts taught throughout the semester. To account for potential confounding variables and to statistically control for individual differences that might influence exam outcomes, we collected demographic data from institutional course records. These variables, which were included in the multiple linear regression model, were gender, age, ethnicity, cumulative GPA, academic major, and years in school.

Including these factors allowed us to statistically control for individual differences that might influence exam outcomes. By adjusting for these demographic characteristics during modeling, the analysis aims to isolate the effect of the mobile exam preparation app on student performance and to provide a more accurate and equitable assessment of the app's educational impact. For instance, GPA was a strong and significant predictor of exam performance in both semesters, although its effect was more pronounced in the Spring semester (without the app), suggesting that the app might have helped narrow performance gaps in the Fall.

The study was approved by the Institutional Review Board (IRB), ensuring compliance with ethical standards for research involving human participants. All participants provided informed consent prior to data collection. Demographic and academic variables were collected to support the study’s analytical framework. These variables were used solely for research purposes and were de-identified during analysis to protect participant confidentiality. The inclusion of these variables was justified by the study’s research question and was conducted in accordance with IRB guidelines.

Our analytical approach aligns with current best practices in educational research design, as outlined by the Institute of Education Sciences (IES, 2013). These guidelines emphasize methodological rigor, transparency, and the integration of both descriptive and inferential statistical techniques to ensure robust and interpretable findings. We employed a multi-step analytical strategy:

- Descriptive Statistics: We began by summarizing exam scores and demographic variables (e.g., gender, GPA, major) across both semesters. This provided a baseline understanding of the sample and allowed for initial comparisons.
- Distribution Testing: To assess whether score distributions differed significantly between groups, we used the Kolmogorov-Smirnov (K-S) test, a non-parametric method suitable for comparing cumulative distributions.
- Inferential Analysis: An independent-samples t-test was conducted to compare mean exam scores between the app-exposed and non-exposed groups. A one-way ANOVA was used to examine performance differences across demographic subgroups.
- Regression Modeling: We constructed a multiple linear regression model with exam score as the dependent variable. Independent variables included semester (Spring vs. Fall), gender, age, ethnicity, GPA, major, and academic year. This model allowed us to isolate the effect of app exposure while controlling for potential confounding variables, following recommendations for causal inference in educational research (NCES, 2024).
- Exploratory Cluster Analysis: Finally, we conducted a cluster analysis to identify patterns of student performance and engagement. This unsupervised learning technique helped reveal latent groupings based on exam outcomes and app usage behavior, offering insight into differential impacts across student subgroups.
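The regression step combines a semester indicator with categorical covariates such as gender and ethnicity. Categorical predictors enter such a model as 0/1 indicators contrasted against a reference category. A coefficient such as the gender effect in the Results is therefore only interpretable relative to that reference. The study used SPSS. What follows is a minimal numpy sketch of the same idea. The variable names, the levels "A"/"B"/"C", and the noise-free synthetic data are all hypothetical, chosen so that the fitted coefficients can be checked by eye.

```python
import numpy as np


def build_design(n, numeric, categorical):
    """Return an OLS design matrix and its column names.

    numeric:     {name: values}, entered as-is (e.g., GPA, a 0/1 Fall indicator)
    categorical: {name: (values, reference_level)}; each non-reference level
                 becomes a 0/1 indicator contrasted with the reference level.
    """
    cols, names = [np.ones(n)], ["intercept"]
    for name, values in numeric.items():
        cols.append(np.asarray(values, dtype=float))
        names.append(name)
    for name, (values, reference) in categorical.items():
        for level in sorted(set(values) - {reference}):
            cols.append(np.array([v == level for v in values], dtype=float))
            names.append(f"{name}[{level}]")
    return np.column_stack(cols), names


def fit_ols(X, y):
    """Least-squares coefficients (standard errors omitted in this sketch)."""
    beta, *_ = np.linalg.lstsq(X, np.asarray(y, dtype=float), rcond=None)
    return beta


# Synthetic, noise-free data with hypothetical variables and levels.
gpa = [2.0, 2.5, 3.0, 3.5, 4.0, 2.2, 2.8, 3.3, 3.9, 2.6, 3.1, 3.7]
fall = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1]   # 1 = Fall, 0 = Spring
group = ["A", "B", "C"] * 4                   # a categorical covariate
offset = {"A": 0.0, "B": -3.0, "C": 1.0}      # true contrasts vs. "A"
score = [60 + 5 * g + 2 * f + offset[c] for g, f, c in zip(gpa, fall, group)]

X, names = build_design(len(score), {"gpa": gpa, "fall": fall},
                        {"group": (group, "A")})
beta = fit_ols(X, score)
for name, b in zip(names, beta):
    print(f"{name:>10s}: {b:6.2f}")
```

Choosing the reference level explicitly, rather than relying on alphabetical order, avoids accidental sign flips. For example, if "Spring" were coded as a category, it would otherwise sort after "Fall".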

All analyses were performed using SPSS, with statistical significance set at p < 0.05.

RESULTS & ANALYSIS

Descriptive Statistics

Overall, students in Fall 2023 (with the app) outperformed those in Spring 2023 on exams. Students in the Fall 2023 semester (treatment group) had a higher mean exam score (M = 76.76%, SD = 11.98 percentage points) than those in Spring 2023 (control group; M = 74.86%, SD = 13.90 percentage points). Demographic characteristics were comparable across groups, with no significant differences in GPA, gender, or academic year.
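A standardized effect size helps put the 1.9-point difference in mean exam scores in context. The sketch below computes Cohen's d (using the pooled SD) and Welch's t from the summary statistics alone. It rests on two assumptions. First, the SDs (0.1198 and 0.1390 on a proportion scale) are taken to equal 11.98 and 13.90 percentage points. Second, the group sizes are taken to be the 845 Fall and 674 Spring students described in the Methodology. The Results paragraph states neither explicitly.

```python
import math


def cohens_d(m1, s1, n1, m2, s2, n2):
    """Cohen's d: mean difference divided by the pooled standard deviation."""
    pooled_var = ((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2)
    return (m1 - m2) / math.sqrt(pooled_var)


def welch_t(m1, s1, n1, m2, s2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    v1, v2 = s1 ** 2 / n1, s2 ** 2 / n2
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


# (mean, SD, n) in percentage points; n values assumed from the Methodology.
fall = (76.76, 11.98, 845)    # treatment: Fall 2023
spring = (74.86, 13.90, 674)  # control: Spring 2023

d = cohens_d(*fall, *spring)
t, df = welch_t(*fall, *spring)
print(f"Cohen's d = {d:.3f}; Welch t = {t:.2f} on {df:.0f} df")
```

Cohen's conventional benchmarks (0.2, 0.5, and 0.8 for small, medium, and large effects) offer one reference point for interpreting d. Welch's form is used because the two groups' SDs differ.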

Distribution Testing

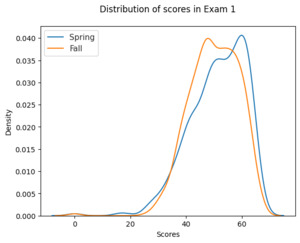

The K-S test indicated a significant difference in the distribution of exam scores between the two semesters (p < .05), suggesting a shift in performance patterns potentially associated with the intervention. The distributions of Exam 1 scores in spring (M = 51.33, SD = 9.89) and fall (M = 50.07, SD = 8.77) were skewed toward the high end and did not follow a normal distribution (see Figure 1). On average, spring scores were slightly higher than fall scores, although they were also more variable. Nonetheless, the two means are close to each other.
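To make the Distribution Testing step concrete, the sketch below computes the two-sample K-S statistic D and an asymptotic p-value. D is the largest vertical gap between the two groups' empirical cumulative distribution functions. This is a pure-Python illustration on hypothetical scores, not the study's SPSS procedure. SPSS may compute its p-value differently (for example, exactly for small samples), so the values need not match.

```python
import math
from bisect import bisect_right


def ks_two_sample(a, b):
    """Two-sample Kolmogorov-Smirnov test.

    Returns (D, p). D is the largest vertical gap between the two empirical
    CDFs; p is an approximate p-value from the Kolmogorov limiting
    distribution with a commonly used small-sample adjustment.
    """
    a, b = sorted(a), sorted(b)
    n, m = len(a), len(b)
    # Both ECDFs are right-continuous step functions, so the largest gap
    # occurs at one of the observed values.
    d = max(abs(bisect_right(a, x) / n - bisect_right(b, x) / m) for x in a + b)
    en = math.sqrt(n * m / (n + m))
    lam = (en + 0.12 + 0.11 / en) * d
    if lam < 0.2:  # Q_KS(lam) equals 1 to many decimal places here
        return d, 1.0
    # Q_KS(lam) = 2 * sum_{k>=1} (-1)^(k-1) * exp(-2 k^2 lam^2)
    p = 2 * sum((-1) ** (k - 1) * math.exp(-2 * k * k * lam * lam)
                for k in range(1, 101))
    return d, min(max(p, 0.0), 1.0)


# Hypothetical exam scores (percent) for two small groups.
spring = [55, 62, 68, 71, 74, 77, 80, 83, 88, 91]
fall = [64, 70, 73, 76, 78, 81, 84, 86, 90, 93]
d, p = ks_two_sample(spring, fall)
print(f"D = {d:.3f}, approximate p = {p:.3f}")
```

Because the K-S test is sensitive to differences in location, spread, and shape, a significant result alone does not indicate which of these aspects of the score distributions changed.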

Regression Analysis

A multiple linear regression model with semester, gender, age, ethnicity, GPA, and year in school as predictors was fitted to the Spring and Fall data.

$$
\Delta\text{Performance}_i = \beta_0 + \beta_1(\text{GPA})_i + \beta_2(\text{Year})_i + \beta_3(\text{Gender})_i + \beta_4(\text{Ethnicity})_i + \varepsilon_i
$$

where a dummy variable equals 1 for students treated in Fall and 0 for control students in Spring;

$\Delta\text{Performance}_i$ is the change in exam performance in Fall compared to Spring;

$(\text{GPA})_i$ is the difference in treated and control students' GPA;

$(\text{Year})_i$ is the difference in treated and control students' year in school (e.g., freshman, sophomore);

$(\text{Gender})_i$ is the difference in treated and control students' gender;

$(\text{Ethnicity})_i$ is the difference in treated and control students' ethnicity.

Table 1 presents the estimated coefficients from separate multiple linear regression models for the Spring and Fall semesters, examining the influence of student characteristics on exam performance.

Interpretation

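Intercepts. The baseline performance (i.e., the predicted exam score when all predictors are zero) was significantly higher in the Fall semester (112.6) than in Spring (89.2), suggesting a general performance boost potentially associated with the mobile app intervention. Because the intercept corresponds to a GPA of zero, which lies far below the GPAs typical of this sample, the intercepts should be interpreted together with the GPA coefficients rather than in isolation.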

GPA. GPA was a strong and significant predictor of exam performance in both semesters. However, its effect was more pronounced in Spring (β = 22.9) than in Fall (β = 13.5). This may indicate that in the absence of structured technological support, students with higher GPAs relied more heavily on their academic strengths. In contrast, the app may have helped narrow performance gaps in the Fall.

Year in School. This variable was not a significant predictor in either semester. The slight negative effect in Spring and the slight positive effect in Fall may reflect transitional academic or social factors, such as students adjusting to new schedules or responsibilities.

Gender. Gender had a significant negative effect on performance in both semesters, with a stronger effect in Spring. This suggests that gender-related disparities in academic outcomes may be more pronounced in traditional learning environments and slightly mitigated when supportive technologies are introduced.

Ethnicity. Ethnicity was not a significant predictor in Spring but had a significant negative effect in Fall. This raises concerns about equitable access and benefits from mobile learning tools. It may reflect disparities in digital literacy, access to devices, or external responsibilities that disproportionately affect certain student groups.
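The GPA interpretation above compares slopes from two separately estimated models (22.9 in Spring vs. 13.5 in Fall). One way to test whether the GPA effect actually differs between semesters is a pooled model with a GPA × Fall interaction. Its interaction coefficient equals the Fall slope minus the Spring slope. The numpy sketch below uses synthetic data with invented true slopes of 20 and 12, which are not the study's estimates, and includes GPA as the only predictor. With the full covariate set, each covariate would need its own interaction term for the pooled model to reproduce the separate-semester fits exactly. The classical standard errors used here assume homoskedastic errors.

```python
import numpy as np


def ols(X, y):
    """OLS coefficients with classical (homoskedastic) standard errors."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (X.shape[0] - X.shape[1])
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    return beta, se


def with_intercept(*columns):
    return np.column_stack([np.ones(len(columns[0])), *columns])


# Synthetic data: the true GPA slope is 20 in Spring and 12 in Fall.
rng = np.random.default_rng(0)
n = 200
fall = rng.integers(0, 2, size=n)          # 1 = Fall, 0 = Spring
gpa = rng.uniform(2.0, 4.0, size=n)
score = (50 + np.where(fall == 1, 12.0, 20.0) * gpa + 8 * fall
         + rng.normal(0, 5, size=n))

# Pooled model: score ~ GPA + Fall + GPA x Fall
beta, se = ols(with_intercept(gpa, fall, gpa * fall), score)
t_interaction = beta[3] / se[3]            # tests whether the slopes differ

# Separate per-semester fits recover the same slopes.
is_spring, is_fall = fall == 0, fall == 1
spring_slope = ols(with_intercept(gpa[is_spring]), score[is_spring])[0][1]
fall_slope = ols(with_intercept(gpa[is_fall]), score[is_fall])[0][1]

print(f"Spring GPA slope {beta[1]:.2f} (separate fit {spring_slope:.2f})")
print(f"Fall GPA slope   {beta[1] + beta[3]:.2f} (separate fit {fall_slope:.2f})")
print(f"Interaction t = {t_interaction:.2f}")
```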

Implications

These findings suggest that the predictors of academic performance vary across semesters, potentially due to differences in instructional design, student engagement, or the presence of educational technologies. The reduced influence of GPA and gender in the Fall semester may indicate that the mobile app helped level the playing field for some students. However, the emergence of ethnicity as a significant negative factor in Fall highlights the need for equity-focused implementation strategies. Instructors should consider providing additional guidance and support for students who may face barriers to fully engaging with mobile learning tools. This includes addressing digital literacy gaps, offering culturally responsive instruction, and ensuring that all students understand how to effectively use the technology.

ANOVA (exam-specific differences)

To assess whether the implementation of teaching technologies in the Fall semester had a statistically significant effect on individual exam scores, we conducted a series of one-way ANOVA tests comparing student performance across the three exams between the Spring and Fall semesters.

Table 2 presents the F-statistics and corresponding p-values for each exam. The results indicate statistically significant differences in exam scores between the two semesters for all three exams (p < .05). Notably, Exam 2 showed the most substantial difference (F = 34.22, p < .001), which aligns with the timing of the mobile app’s introduction and promotion in the Fall semester.

These findings suggest that the integration of mobile learning technologies may have contributed to improved exam performance, particularly in the middle of the semester when students had time to engage with the app before the second exam.
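With only two groups (Spring vs. Fall), a one-way ANOVA is equivalent to a pooled-variance independent-samples t-test, with F = t². Assuming each exam-level ANOVA in Table 2 compares exactly these two semesters, the Exam 2 statistic F = 34.22 corresponds to |t| ≈ 5.85. The sketch below checks this identity on hypothetical scores using only the Python standard library.

```python
import math
from statistics import mean, variance


def pooled_t(a, b):
    """Independent-samples t statistic assuming equal variances."""
    n1, n2 = len(a), len(b)
    sp2 = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return (mean(a) - mean(b)) / math.sqrt(sp2 * (1 / n1 + 1 / n2))


def one_way_anova_f(*groups):
    """One-way ANOVA F: between-group mean square over within-group mean square."""
    pooled = [x for g in groups for x in g]
    grand = mean(pooled)
    k, n = len(groups), len(pooled)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum(sum((x - mean(g)) ** 2 for x in g) for g in groups)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical Exam 2 scores (percent) for a handful of students per semester.
spring = [68.0, 74.0, 71.0, 80.0, 77.0, 65.0, 72.0]
fall = [73.0, 79.0, 75.0, 84.0, 78.0, 70.0, 81.0]

t = pooled_t(fall, spring)
f = one_way_anova_f(fall, spring)
print(f"t = {t:.3f}, t^2 = {t * t:.3f}, F = {f:.3f}")
print(f"|t| implied by F = 34.22: {math.sqrt(34.22):.2f}")
```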

Cluster analysis (student profiles)

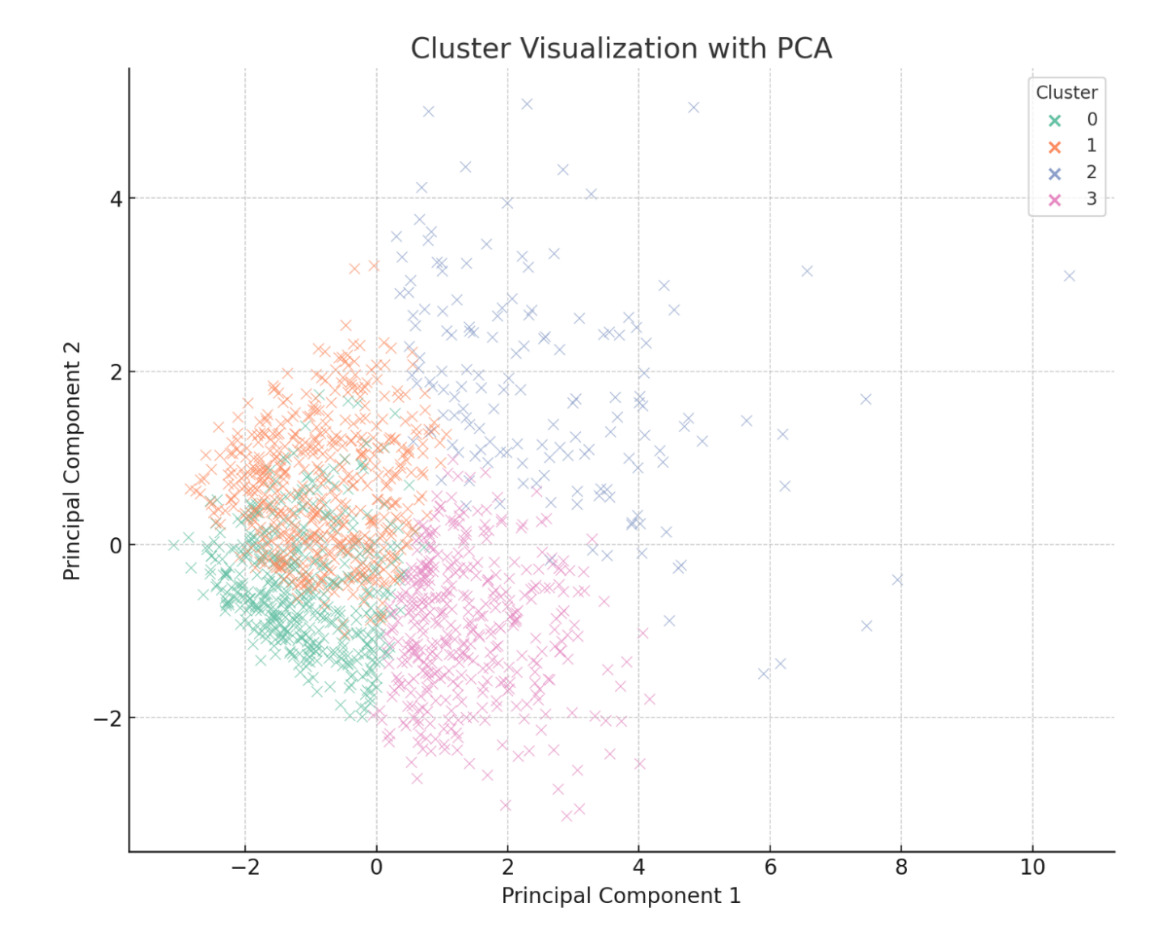

We conducted a detailed cluster analysis to identify distinct student profiles based on academic performance and demographic characteristics. To interpret and visualize the results, we first computed the mean of each relevant feature within each cluster, thereby defining clear group profiles. We then applied Principal Component Analysis (PCA) to reduce dimensionality and visualize the distribution of clusters in a two-dimensional space. The average characteristics of each cluster are as follows:

Cluster 0: This cluster is predominantly composed of male students who exhibit high exam, assignment, and quiz scores and have an average GPA of approximately 3.57. Students in this group thus demonstrate strong academic performance and high engagement with evaluative activities.

Cluster 1: This cluster primarily consists of female students who also achieve good exam and activity scores. However, they have a slightly lower average GPA (around 3.40) than Cluster 0. This group shows solid academic performance, with a distinct demographic (gender) difference and minor academic variations relative to Cluster 0.

Cluster 2: Students in this cluster exhibit the lowest scores on exams, assignments, and quizzes, alongside the lowest average GPA of approximately 2.36. This profile suggests a group facing notable academic challenges and limited engagement in evaluated activities.

Cluster 3: Positioned between the higher-performing and lower-performing clusters, this mixed-gender group has moderate exam scores and an intermediate average GPA of approximately 2.99. Thus, this cluster represents students with mid-range academic performance.

Figure 3 provides a PCA-based visualization of the clusters, depicting how student groups are distributed within a two-dimensional space based on the analyzed characteristics. Each point represents a student, color-coded according to cluster membership.

This graphical representation offers valuable insights. Clusters 0 and 1, reflecting students with relatively higher academic performance, appear close to one another, highlighting similarities in academic and engagement profiles despite differences in gender and GPA. In contrast, Cluster 2, comprising students experiencing significant academic struggles, is distinctly separate, underscoring clear differences in engagement and performance. Cluster 3, with intermediate characteristics, appears between these extremes, as its profile would suggest.
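The text does not specify the clustering algorithm or its preprocessing. The sketch below assumes k-means with k = 4 on standardized features, which is consistent with four clusters numbered 0 to 3. The k-means step is followed by per-cluster feature means (the profiles) and a PCA projection for plotting. The three features and the synthetic data are hypothetical. Deterministic farthest-point seeding keeps the example reproducible. Library implementations typically use randomized k-means++ seeding with several restarts instead.

```python
import numpy as np


def standardize(X):
    """Z-score each column so that no feature dominates the distances."""
    return (X - X.mean(axis=0)) / X.std(axis=0)


def farthest_point_init(X, k):
    """Deterministic seeding: start at the first row, then repeatedly add
    the row farthest from all centers chosen so far."""
    idx = [0]
    for _ in range(1, k):
        dist = np.min([np.linalg.norm(X - X[i], axis=1) for i in idx], axis=0)
        idx.append(int(np.argmax(dist)))
    return X[idx].copy()


def kmeans(X, k, max_iter=100):
    """Lloyd's algorithm: alternate nearest-center assignment and mean update."""
    centers = farthest_point_init(X, k)
    for _ in range(max_iter):
        dists = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        new_centers = np.array([X[labels == j].mean(axis=0) if np.any(labels == j)
                                else centers[j] for j in range(k)])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers


def pca_2d(X):
    """Project rows onto the first two principal components via SVD."""
    Xc = X - X.mean(axis=0)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ vt[:2].T


# Synthetic data: 4 well-separated groups of 25 students with 3 features
# (hypothetical stand-ins for, e.g., exam average, quiz average, and GPA).
rng = np.random.default_rng(1)
true_centers = np.array([[0, 0, 0], [10, 0, 0], [0, 10, 0], [0, 0, 10]], dtype=float)
raw = np.vstack([c + rng.normal(0, 0.5, size=(25, 3)) for c in true_centers])

labels, _ = kmeans(standardize(raw), k=4)
profiles = np.array([raw[labels == j].mean(axis=0) for j in range(4)])  # original scale
coords = pca_2d(standardize(raw))  # 2-D points for a scatter plot like Figure 3
print(np.round(profiles, 2))
```

Computing the profiles on the original (unstandardized) scale keeps them readable, for example as an average GPA of about 3.5. The clustering itself uses standardized features.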

The cluster analysis further underscores the critical role of engagement. Specifically, students who consistently used the mobile exam preparation app tended to achieve better academic outcomes, suggesting that the app's effectiveness correlates strongly with usage intensity. This insight is valuable for educators aiming to integrate mobile learning into their instructional strategies. Nevertheless, given the quasi-experimental nature of this study and its non-randomized group assignment, causal interpretations should be approached with caution. Differences observed across semesters may reflect unmeasured confounding variables such as instructor effectiveness or varying cohort characteristics. To strengthen causal inference, further research could use randomized controlled trials or longitudinal designs.

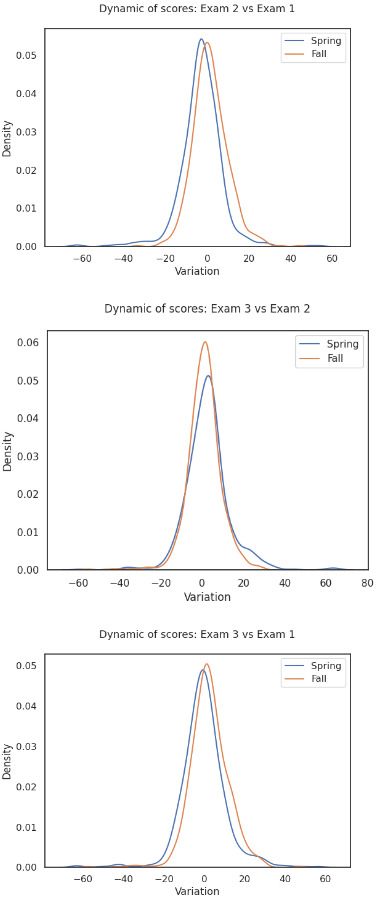

Exam Score Progression Analysis (temporal trends)

To further explore the impact of the intervention over time, we analyzed changes in exam scores within each semester. Specifically, we compared the variation in scores between Exam 2 and Exam 3 (post-intervention period) and between Exam 1 and Exam 2 (pre-intervention period). In the Fall 2023 semester (with app promotion), students showed a greater average increase in scores from Exam 2 to Exam 3 than from Exam 1 to Exam 2, suggesting a positive effect following the app’s introduction. In contrast, the Spring 2023 semester (without app promotion) showed no meaningful change in score progression across the same intervals. This within-group trend supports the hypothesis that the mobile app, when promoted and modeled by the instructor, contributed to improved academic performance over time. It also complements the cluster analysis by showing that performance gains were not only group-specific but also temporally aligned with the intervention.
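The within-semester comparison described above can be expressed as a simple contrast of average score gains. The sketch below assumes each student record holds three exam scores under the hypothetical keys `exam1`, `exam2`, and `exam3`. The toy records are invented for illustration and are not the study's data. The final line takes the difference between the two semesters' contrasts, a difference-in-differences style summary. It is descriptive only and does not replace a significance test.

```python
from statistics import mean


def mean_gain(records: list[dict[str, float]], start: str, end: str) -> float:
    """Average within-student change from exam `start` to exam `end`."""
    return mean(r[end] - r[start] for r in records)


def progression_contrast(records: list[dict[str, float]]) -> float:
    """Post-intervention gain (Exam 2 -> 3) minus pre-intervention gain (Exam 1 -> 2)."""
    pre = mean_gain(records, "exam1", "exam2")
    post = mean_gain(records, "exam2", "exam3")
    return post - pre


# Hypothetical toy records (NOT the study's data).
fall_2023 = [  # semester with instructor app promotion
    {"exam1": 70, "exam2": 72, "exam3": 80},
    {"exam1": 65, "exam2": 66, "exam3": 73},
]
spring_2023 = [  # semester without app promotion
    {"exam1": 70, "exam2": 73, "exam3": 75},
    {"exam1": 68, "exam2": 70, "exam3": 72},
]

fall_contrast = progression_contrast(fall_2023)      # 7.5 - 1.5 = 6.0
spring_contrast = progression_contrast(spring_2023)  # 2.0 - 2.5 = -0.5
did = fall_contrast - spring_contrast                # 6.5
```

A positive `did` means the post-intervention acceleration in scores was larger in the semester with app promotion. This is the pattern the paragraph above describes, here shown only on invented numbers.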

DISCUSSION AND CONCLUSION

This study provides empirical support for the hypothesis that instructor-led promotion of a mobile exam preparation app can positively influence student performance in a large undergraduate marketing course. The significant improvement in exam scores between semesters, even after controlling for demographic variables, suggests that the app, when integrated into instruction, may enhance learning outcomes. These findings reinforce prior research emphasizing that access alone is insufficient; active faculty engagement is critical to realizing the benefits of educational technology (Mafunda & Swart, 2020). They also align with Bandura’s Social Cognitive Theory, which emphasizes the role of modeled behavior in the adoption of new behaviors. The instructor’s in-class demonstrations contributed to increased student engagement with the app, reinforcing its perceived value. This directly supports Hypothesis 1 (H1), which posited that instructor-led promotion would increase engagement, and Hypothesis 2 (H2), which posited that this engagement would positively influence performance. Additionally, the results are consistent with Mayer’s Cognitive Theory of Multimedia Learning, as the app’s design may have supported cognitive processing through interactive and multimedia elements. This finding aligns with Hypothesis 3 (H3), which predicted that the app’s design would facilitate concept acquisition, and Hypothesis 4 (H4), which linked effective concept acquisition to improved exam scores. Finally, the overall significant improvement in exam scores, even after controlling for demographic variables, further supports the integrated Hypothesis 5 (H5), highlighting the synergistic effect of both social (instructor modeling) and cognitive (app design) factors on academic outcomes.

While exam scores served as a direct and quantifiable measure of academic performance in this study, it is important to acknowledge that they primarily capture short-term gains and immediate concept acquisition. They may not fully reflect deeper learning outcomes such as long-term knowledge retention, the development of critical thinking skills, or the ability to apply learned concepts in novel situations. Future research should therefore aim to employ a broader range of assessment methods to capture these multifaceted aspects of student learning.

Interestingly, while GPA remained a significant predictor of performance across both semesters, its relative effect was stronger in the Spring semester. This may indicate that in environments without structured technological integration, academically stronger students are better equipped to succeed independently. The narrowed gap in the Fall semester implies that mobile learning tools, when used effectively, may serve to level the playing field, reducing disparities in academic performance related to prior achievement.
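One simple way to see what a "stronger GPA effect" means is to compare the GPA slope estimated separately for each semester. The sketch below fits a bivariate least-squares slope of exam score on GPA. The study's models also controlled for demographic variables, which this simplification omits, and the toy values and the helper name `ols_slope` are hypothetical rather than drawn from the study.

```python
from statistics import mean


def ols_slope(x: list[float], y: list[float]) -> float:
    """Least-squares slope of y on x: cov(x, y) / var(x)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    if sxx == 0:
        raise ValueError("x has no variance")
    return sxy / sxx


# Hypothetical toy data (NOT the study's data): GPA and exam score per student.
gpa = [2.0, 2.5, 3.0, 3.5, 4.0]
spring_scores = [60, 66, 72, 78, 84]
fall_scores = [68, 71, 74, 77, 80]

spring_slope = ols_slope(gpa, spring_scores)  # 12.0 exam points per GPA point
fall_slope = ols_slope(gpa, fall_scores)      # 6.0 exam points per GPA point
```

A steeper slope in one semester corresponds to a larger score advantage per GPA point. In a full analysis, this comparison would typically be made with a semester × GPA interaction term in a single regression that also includes the demographic controls.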

However, the finding that ethnicity had a significant negative effect in the Fall semester suggests that not all students are equally positioned to benefit from mobile learning. This highlights the importance of culturally responsive pedagogy and equitable access to technological resources. It may be that students with lower prior preparation or from certain backgrounds did not or could not take full advantage of the app because of differences in digital literacy or outside responsibilities. Instructors may therefore need to provide additional guidance or resources for these groups to ensure equitable benefit. These nuanced findings further refine our understanding of Hypothesis 5 (H5): while a synergistic effect exists, its benefits may not be uniformly distributed, which underscores the need for equity-focused implementation strategies.

The cluster analysis further highlights the importance of engagement: students with higher GPAs and stronger academic engagement benefited most, while those in lower-performing clusters may require additional scaffolding or personalized support to fully leverage mobile learning technologies. These insights suggest that mobile apps are not universally beneficial in isolation but are most effective when embedded in a broader pedagogical strategy that includes instructor modeling, consistent reinforcement, and integration with class activities.

Implications for Practice

This study fills a critical gap in the literature by demonstrating that in non-language, content-intensive courses, such as undergraduate marketing, deliberate promotion of a mobile study app by the instructor can yield measurable gains in student performance. For marketing educators, these findings present several actionable implications for enhancing pedagogical strategies and preparing students for the complexities of the real world:

- Strategic Integration for Enhanced Concept Acquisition and Deep Learning: Instead of treating mobile apps as merely optional supplements, educators should view them as strategic tools integral to the learning process. The study’s alignment with Bandura’s Social Cognitive Theory highlights the importance of instructor modeling; when faculty actively demonstrate and encourage app usage, student engagement and performance improve significantly. For instance, an instructor can show students how to use features such as flashcards and quizzes (as in McGraw-Hill’s Sharpen app) to reinforce core marketing concepts. Furthermore, consistent with Mayer’s Cognitive Theory of Multimedia Learning, apps with well-designed multimedia elements, interactive exercises, and practice tests can support deep learning by managing cognitive load and promoting germane processing. This approach moves beyond rote memorization, encouraging students to actively process and internalize complex marketing theories and frameworks.

- Fostering Critical Thinking and Knowledge Retention: To foster critical thinking, marketing educators can leverage the practice problems and step-by-step solutions offered by apps like Sharpen. Rather than letting students simply accept the app’s answers, instructors can challenge them to explain the reasoning behind solutions, analyze alternative approaches, or debate the applicability of a concept in different market scenarios. This can be done through in-class discussions or short assignments that require students to elaborate on app-based practice problems. Similarly, the systematic, self-paced review capabilities of these apps, including flashcards and personalized recommendations for improvement, can strengthen long-term knowledge retention. Educators can assign app activities as pre-class preparation, post-class review, or integrated challenges that require students to recall and apply knowledge over extended periods, thus mitigating the common problem of short-term learning aimed only at passing exams.

- Preparation for Real-World Marketing Problems and Applied Skills: Although the study primarily measured exam scores related to core marketing concepts, the features of mobile apps can be adapted to prepare students for real-world marketing problems. For example, using the app’s practice problems as a foundation, instructors can introduce case studies or scenarios that require students to apply theoretical knowledge to practical situations, such as developing a marketing strategy, analyzing consumer behavior, or evaluating market research data. This moves beyond theoretical understanding to practical application, a critical skill in the marketing profession. Educators can also encourage students to think critically about how the concepts reviewed in the app would manifest in a business context, fostering the ability to transfer knowledge to professional challenges.

- Addressing Equity and Personalized Learning: The finding that mobile learning tools can help narrow performance gaps, reducing the advantage that high-GPA students otherwise draw from their prior academic strengths, underscores their potential to level the playing field. However, the emergence of ethnicity as a significant negative factor highlights the need for equity-focused implementation strategies. Marketing educators must be proactive in providing additional guidance and support for students who may face barriers to fully engaging with mobile learning tools, such as disparities in digital literacy, access to devices, or external responsibilities. This might include offering in-class tutorials, creating inclusive instructional materials that resonate with diverse student backgrounds, or providing alternative support mechanisms so that all students can effectively leverage the technology. The cluster analysis reinforces this point, indicating that students in lower-performing clusters may require additional scaffolding or personalized support to benefit fully from these technologies.

In summary, mobile apps are not universally beneficial in isolation; they are most effective when embedded in a broader pedagogical strategy that includes instructor modeling, consistent reinforcement, and deep integration with class activities, fostering not only exam performance but also deep understanding, critical thinking, and practical application for future marketing professionals.

Limitations and Future Research

This study was conducted at a large, research-intensive (Carnegie R1) university, which may limit generalizability to smaller institutions or non-research universities. Additionally, the primary goal was to conduct a comparative analysis to explore the relationship between faculty promotion of mobile apps and student exam performance. By adjusting for demographic characteristics, the analysis aimed to isolate the effect of the mobile exam preparation app on student performance. However, because group assignments were not randomized, causal interpretations should be approached with caution, as observed differences might reflect unmeasured confounding variables such as instructor effectiveness or varying cohort characteristics. To support more rigorous causal inference, future research could incorporate randomized controlled trials or longitudinal study designs.

This study relied on self-reported measures of app usage, with students indicating whether they used the app during class, but without providing detailed metrics such as time spent, frequency of use, or task completion. While these self-reports provide a preliminary understanding of engagement, they are limited in precision and may be subject to recall or social desirability bias. Future research should incorporate more objective and granular measures of engagement, including digital traces of app usage (e.g., log-ins, duration of activity, completion of interactive tasks) to better capture how students interact with mobile learning tools.

Future research could also examine the longitudinal effects of mobile app usage across multiple semesters and disciplines, moving beyond immediate exam performance to assess sustained knowledge retention and the development of higher-order thinking skills. This could involve follow-up assessments or performance tasks administered well after course completion. Additionally, qualitative research could offer richer insight into student perceptions, barriers to usage, and differential effects across demographic groups, as well as into how students develop critical thinking abilities and apply marketing concepts in practical scenarios, which traditional multiple-choice exams might not fully capture. Incorporating measures such as project-based assessments, case study analyses, or simulations could further complement exam scores by evaluating students’ applied skills and problem-solving capabilities.

In an era where digital tools are increasingly intertwined with pedagogy, higher education institutions must strategically adopt and assess these technologies to foster inclusive, effective, and scalable learning environments.